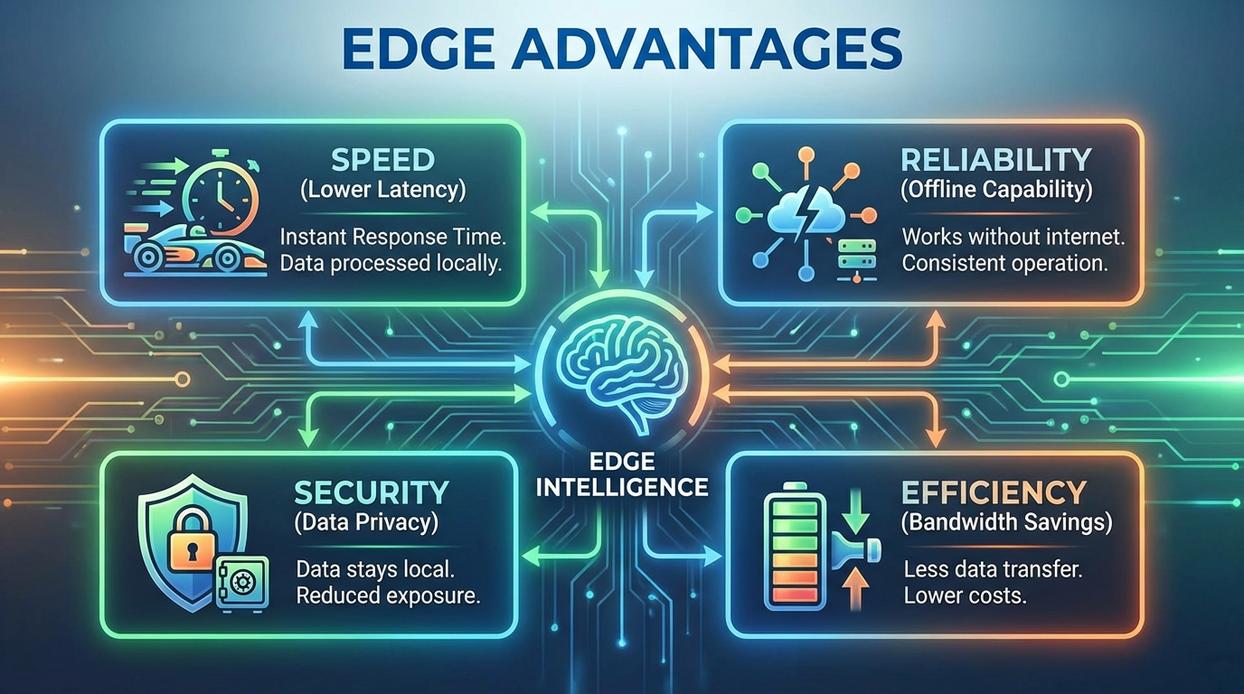

Cloud computing transformed how we store and process information—but today’s always-on, hyperconnected world is pushing it to its limits. As IoT devices multiply and real-time applications demand split-second decisions, sending every byte of data to distant servers creates latency, rising bandwidth costs, and avoidable security exposure. This article explores the tangible, system-level advantages of moving processing closer to where data is created. You’ll gain a clear understanding of edge computing benefits, from faster response times to improved reliability and stronger data control, and discover which high-performance use cases benefit most from a decentralized architecture.

Advantage 1: Slashing Latency for Real-Time Responsiveness


Latency is the delay between a command and a response: the digital equivalent of yelling into a canyon and waiting for the echo. In technical terms, it’s the time it takes for data to travel from a device to a server and back again. In centralized cloud systems, that round trip can stretch from milliseconds to full seconds depending on distance and congestion. For time-sensitive applications, that delay is their Achilles’ heel.

Here’s my take: if your system depends on split-second decisions, shipping data to a faraway data center is like mailing a letter when you should be making a phone call. Processing data locally on an edge device or gateway eliminates the long-haul trip to a distant server. The result? Network delays that can stretch toward seconds shrink to near-instant milliseconds.
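To see why distance alone matters, here is a rough sketch of the physical floor on round-trip time. It assumes signals in optical fiber travel at roughly two-thirds the speed of light (about 200,000 km/s); the distances are illustrative assumptions, not measurements, and real latency is higher once routing, queuing, and server processing are added.

```python
# Assumption: light in optical fiber travels at roughly 2/3 of c (~200,000 km/s).
FIBER_KM_PER_S = 200_000


def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time, in milliseconds.

    Counts propagation delay only; routing detours, queuing, and server
    processing all add to real-world latency on top of this floor.
    """
    return 2 * distance_km / FIBER_KM_PER_S * 1000


# Hypothetical one-way distances from a device to where its data is processed.
for label, km in [("on-site edge gateway", 0.1),
                  ("regional data center", 500),
                  ("distant data center", 4000)]:
    print(f"{label}: at least {min_round_trip_ms(km):.3f} ms round trip")
```

Even with a perfectly idle network, the distant data center costs tens of milliseconds per round trip, while the on-site gateway's propagation delay is negligible by comparison.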

Where does this really matter?

- Autonomous vehicles interpreting sensor data before hitting the brakes

- Industrial robots performing precision assembly without hesitation

- AR/VR systems syncing overlays with real-world movement seamlessly

Some argue cloud latency is “good enough.” I disagree. When safety, immersion, or mechanical accuracy is at stake, “good enough” isn’t good enough. This is where edge computing benefits become undeniable.
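A quick back-of-the-envelope check makes the safety argument concrete: for a moving vehicle, every millisecond of delay is distance covered before a decision takes effect. The speed and delay values below are illustrative assumptions.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Meters a vehicle travels while waiting for a decision to arrive."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # convert km/h to m/s
    return speed_m_per_s * delay_ms / 1000   # convert ms to s


# Hypothetical delays for a vehicle moving at 100 km/h.
for delay in (10, 100, 500):
    d = distance_during_delay_m(100, delay)
    print(f"{delay:>4} ms at 100 km/h -> {d:.2f} m traveled")
```

At 100 km/h, a 500 ms round trip means the vehicle has moved nearly 14 meters before it can act on what its sensors saw.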

Advantage 2: Optimizing Bandwidth and Reducing Network Strain

The Data Deluge

Every second, modern systems generate staggering amounts of data. A single high-definition security camera can produce several gigabytes or more per day, depending on resolution and compression, while industrial IoT sensors stream constant temperature, pressure, and vibration readings. Smart city infrastructure, including traffic cameras, environmental monitors, and connected streetlights, adds even more to the flood. This explosion of information is often called a data deluge: data volumes so large they strain traditional systems.
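How quickly does one camera add up? A rough sketch, assuming a continuous stream at a constant bitrate and decimal units; the bitrates below are hypothetical, and real cameras vary with resolution, frame rate, codec, and scene activity.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def gigabytes_per_day(bitrate_mbps: float) -> float:
    """Daily volume of a continuous stream at a constant bitrate.

    Decimal units: 1 Mbps = 1,000,000 bits/s and 1 GB = 10**9 bytes.
    """
    bytes_per_second = bitrate_mbps * 1_000_000 / 8
    return bytes_per_second * SECONDS_PER_DAY / 1e9


# Hypothetical bitrates spanning heavily compressed to higher-quality streams.
for mbps in (0.5, 2, 4):
    print(f"{mbps} Mbps -> {gigabytes_per_day(mbps):.1f} GB/day")
```

At the low end this lands in the "several gigabytes" range; higher-bitrate streams reach tens of gigabytes per camera per day.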

The Bottleneck Problem

However, sending all raw data to centralized cloud servers creates a serious bottleneck (think of a highway jammed at rush hour). Bandwidth, the maximum rate of data transfer across a network, gets consumed quickly. The result? Network congestion, slower response times, and rising operational costs. Some argue that cloud scaling solves this. But scaling adds compute and storage inside the data center; it does not widen the link between a site and the cloud, and continuously transmitting unfiltered data remains expensive and inefficient.
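The bottleneck is easy to quantify for a single site. This sketch assumes a hypothetical facility with 40 cameras streaming raw video over a shared 100 Mbps uplink; the figures are illustrative, not from a real deployment.

```python
def uplink_utilization(devices: int, per_device_mbps: float, uplink_mbps: float) -> float:
    """Fraction of uplink capacity needed if every device streams raw data."""
    return devices * per_device_mbps / uplink_mbps


# Hypothetical site: 40 cameras at 4 Mbps each behind a 100 Mbps uplink.
raw = uplink_utilization(devices=40, per_device_mbps=4, uplink_mbps=100)
print(f"Raw streaming needs {raw:.0%} of the uplink")  # 160%: oversubscribed

# Hypothetical: edge filtering forwards only 5% of the raw traffic.
filtered = uplink_utilization(devices=40, per_device_mbps=4 * 0.05, uplink_mbps=100)
print(f"Filtered streaming needs {filtered:.0%} of the uplink")
```

Anything above 100% means the link cannot keep up no matter how much capacity the cloud side adds.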

How Edge Alleviates Strain

Instead, implement data filtering at the edge. Edge devices pre-process information locally, extracting meaningful insights and discarding noise. For example, a camera can transmit only motion-triggered clips rather than 24/7 footage. As a result, organizations dramatically reduce data transfer loads.
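The camera example filters on motion; the same principle applies to sensor streams. Below is a minimal sketch of one common technique, a deadband (report-by-exception) filter, which forwards a reading only when it moves meaningfully away from the last value sent. The function name, threshold, and sample data are hypothetical.

```python
from typing import Iterable, Iterator, Optional, Tuple


def deadband_filter(
    readings: Iterable[Tuple[float, float]], threshold: float
) -> Iterator[Tuple[float, float]]:
    """Yield (timestamp, value) only when value differs from the last
    forwarded value by more than `threshold`.

    The first reading is always forwarded so the cloud has a baseline.
    """
    last_sent: Optional[float] = None
    for ts, value in readings:
        if last_sent is None or abs(value - last_sent) > threshold:
            last_sent = value
            yield ts, value


# Hypothetical temperature samples (seconds, degrees C) from one sensor.
samples = [(0, 21.0), (1, 21.1), (2, 21.05), (3, 22.4), (4, 22.5), (5, 19.9)]
to_send = list(deadband_filter(samples, threshold=0.5))
print(to_send)  # [(0, 21.0), (3, 22.4), (5, 19.9)]
```

Six raw samples become three transmissions; small jitter stays on the device, while genuine shifts still reach the cloud.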

If you’re designing distributed systems, prioritize local analytics and selective syncing. This approach not only lowers costs but clearly demonstrates edge computing benefits in real-world deployments.
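A minimal sketch of local analytics with selective syncing, assuming a hypothetical policy: the edge node reduces each window of raw samples to a compact summary, and only windows that breach a limit are flagged for immediate upload. The function names, limit, and readings are illustrative.

```python
from statistics import mean
from typing import Dict, List


def summarize_window(values: List[float]) -> Dict[str, float]:
    """Collapse a window of raw samples into one compact summary record."""
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
    }


def should_sync(summary: Dict[str, float], alarm_max: float) -> bool:
    """Hypothetical policy: sync right away only if the window breached a limit."""
    return summary["max"] > alarm_max


# Hypothetical one-minute window of vibration readings (mm/s).
window = [2.1, 2.3, 2.2, 2.4, 7.9, 2.2]
summary = summarize_window(window)
print(summary, should_sync(summary, alarm_max=7.1))
```

Routine windows can be batched and uploaded on a relaxed schedule, while anomalous ones are pushed immediately.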

Advantage 3: Enhancing Security and Data Privacy

“Every time data crosses the public internet, you’re rolling the dice,” a security architect once told me. He wasn’t exaggerating. Transmitting sensitive information to distant cloud servers expands the attack surface—the total number of points where unauthorized users can try to access data. More hops mean more opportunities for interception (and hackers only need one).

Edge computing flips that script. By processing and storing data locally, organizations shrink exposure dramatically. Instead of shipping everything to centralized infrastructure, sensitive information stays closer to its source—less travel, fewer vulnerabilities.

Key security gains include:

- Reduced interception risk during transit

- Faster threat detection at the device level

- Greater operational control over critical systems

Keeping data local can also simplify compliance with regulations like GDPR, which restricts transfers of personal data outside the European Economic Area, and HIPAA, which requires safeguards for protected health information (European Commission, 2016; HHS, 1996).
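One way to keep sensitive details on-site is to forward only derived results plus a pseudonymized identifier. This minimal sketch assumes a hypothetical health-monitoring record and a secret key provisioned on the edge device; it uses a keyed hash (HMAC-SHA256) from Python's standard library. Note that pseudonymized data can still count as personal data under GDPR, so this reduces exposure rather than guaranteeing compliance.

```python
import hashlib
import hmac
import json

# Assumption: this key is provisioned on the edge device and never leaves the site.
SITE_SECRET_KEY = b"replace-with-a-securely-provisioned-key"


def pseudonymize(identifier: str) -> str:
    """Keyed hash of an identifier: stable per site, not reversible without the key."""
    return hmac.new(SITE_SECRET_KEY, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_upload(record: dict) -> str:
    """Keep raw fields local; forward only a pseudonymized ID and a derived flag."""
    outbound = {
        "subject": pseudonymize(record["patient_id"]),
        "heart_rate_alert": record["heart_rate"] > 120,  # hypothetical threshold
    }
    return json.dumps(outbound)


# Hypothetical local record; the name and raw ID never leave the device.
local_record = {"patient_id": "MRN-004521", "name": "Jane Doe", "heart_rate": 134}
print(prepare_upload(local_record))
```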

For deeper context, see our article on cybersecurity shifts: emerging threats and defenses to watch.

Shrinking the attack surface this way is one of the clearest edge computing benefits security teams point to today.

Advantage 4: Ensuring Operational Reliability

Ensuring operational reliability means planning for the moment the internet drops. On remote oil rigs, sprawling farmland, cargo ships, or moving vehicles, connectivity is often intermittent at best. A cloud-only model assumes constant access. When that link fails, dashboards freeze, automated controls stall, and teams are left waiting on a spinning loading icon (we’ve all been there).

Compare the options:

- Cloud-dependent systems halt operations when connectivity disappears, creating downtime and revenue loss.

- Edge-enabled systems continue running locally, processing data and executing rules without outside support.

This autonomous operation is one of the core edge computing benefits. Edge nodes—localized devices that handle computation near the source of data—store information on-site and sync it back to the central cloud once connectivity returns. Critics argue cloud centralization simplifies management, and they’re right about oversight. But resilience matters more when safety and uptime are nonnegotiable. Pro tip: design failover paths early. Always.
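The store-and-forward pattern described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `EdgeNode` class, the pressure threshold, and the `upload` callback are all hypothetical, and a real node would persist its buffer to disk rather than keep it in memory.

```python
from collections import deque
from typing import Callable, Deque, Dict


class EdgeNode:
    """Store-and-forward sketch: act locally, buffer results, sync when online."""

    def __init__(self, upload: Callable[[Dict], bool], max_buffer: int = 10_000):
        self.upload = upload  # hypothetical uplink; returns True on success
        # Bounded buffer: if an outage outlasts capacity, the oldest records drop.
        self.buffer: Deque[Dict] = deque(maxlen=max_buffer)

    def handle_reading(self, pressure_kpa: float) -> str:
        # The local rule runs regardless of connectivity (threshold is hypothetical).
        action = "open_relief_valve" if pressure_kpa > 850 else "none"
        self.buffer.append({"pressure_kpa": pressure_kpa, "action": action})
        return action

    def sync(self) -> int:
        """Drain the buffer in order; stop at the first failed upload."""
        sent = 0
        while self.buffer:
            if not self.upload(self.buffer[0]):
                break  # still offline; keep the record for the next attempt
            self.buffer.popleft()
            sent += 1
        return sent


# Usage with a simulated link that is down, then restored.
link_up = False
cloud_inbox = []


def simulated_upload(record: Dict) -> bool:
    if link_up:
        cloud_inbox.append(record)
    return link_up


node = EdgeNode(simulated_upload)
print(node.handle_reading(900))  # open_relief_valve
print(node.handle_reading(700))  # none
print(node.sync())               # 0 (offline, both records stay buffered)
link_up = True
print(node.sync())               # 2
```

The safety action fires immediately in both cases; only the reporting waits for the link to return.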

Advantage 5: Reducing Long-Term Operating Costs

Upfront hardware costs can feel intimidating. However, in my view, focusing only on day-one expenses misses the bigger financial story. Over time, edge deployments steadily trim operational waste, while cloud fees keep piling up as data volumes grow, like a Netflix subscription you forgot to cancel. Specifically, organizations save through:

- Lower cloud data ingress and egress fees

- Reduced long-term storage requirements

- Decreased network bandwidth spending

Moreover, these efficiencies grow with every new device added. In my opinion, that compounding effect is the real strategic advantage, and the math improves dramatically as deployments scale.
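A simple payback model shows why scale helps. This sketch assumes upfront spend is a fixed cost (gateways, setup) plus per-device hardware, and that each device saves a fixed amount per month in transfer and storage fees. All figures are hypothetical, and the model ignores maintenance, energy, and discounting.

```python
import math


def break_even_month(devices: int, fixed_cost: float, hw_per_device: float,
                     monthly_saving_per_device: float) -> int:
    """First whole month in which cumulative savings cover the upfront spend."""
    upfront = fixed_cost + devices * hw_per_device
    monthly = devices * monthly_saving_per_device
    if monthly <= 0:
        raise ValueError("no payback without positive monthly savings")
    return math.ceil(upfront / monthly)


# Hypothetical figures, not benchmarks.
for n in (50, 200, 800):
    month = break_even_month(n, fixed_cost=5_000, hw_per_device=50,
                             monthly_saving_per_device=9)
    print(f"{n:>4} devices: break-even in month {month}")
```

Because the fixed cost is spread over more devices, payback arrives sooner as the fleet grows, which is the scaling effect described above.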

Making the Shift: When to Move Your Systems to the Edge

Modern applications demand instant responses, continuous uptime, and stronger data control. That’s exactly where edge computing benefits stand out—delivering speed, reliability, and security by processing data closer to where it’s created. If you’re struggling with lag, bandwidth costs, or performance slowdowns, it’s a clear sign that centralized cloud infrastructure alone may no longer be enough. Real-time environments simply can’t afford delay.

Now is the time to evaluate your systems. Identify latency bottlenecks, rising data transfer expenses, and mission-critical workflows. Pinpoint where an edge strategy can reduce friction, improve performance, and give your operations the responsiveness they require.